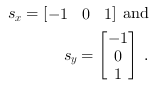

The image gradient magnitude and orientation are calculated for each pixel in the image. The gradient filter kernels in the x- and y-direction are:

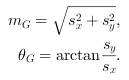
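The kernel figure is not reproduced in this text. The sketch below computes the per-pixel gradients under an assumption: it uses the simple centred kernels [-1, 0, 1] and its transpose recommended in [Dal05], and the VA designs may use different ones. The function name `gradients` and the edge-replication border handling are illustrative choices, not taken from the designs.

```python
from typing import List, Tuple

Image = List[List[float]]


def gradients(img: Image) -> Tuple[Image, Image]:
    """Return (gx, gy) for every pixel of a grey-value image.

    Assumption: the kernels are [-1, 0, 1] (x) and its transpose (y);
    image borders are handled by replicating the edge pixels.
    """
    h, w = len(img), len(img[0])
    gx = [[0.0] * w for _ in range(h)]
    gy = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Clamp neighbour coordinates to the image (edge replication).
            left = img[y][max(x - 1, 0)]
            right = img[y][min(x + 1, w - 1)]
            up = img[max(y - 1, 0)][x]
            down = img[min(y + 1, h - 1)][x]
            gx[y][x] = right - left  # kernel [-1, 0, 1]
            gy[y][x] = down - up     # kernel [-1, 0, 1]^T, y grows downwards
    return gx, gy
```

For a horizontal ramp such as `[[0, 1, 2]] * 3`, every pixel gets a positive `gx` and a zero `gy`.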

The gradient magnitude and orientation are then calculated as:
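The exact formulas of the VA designs are not reproduced here. A common choice, assumed in the sketch below, is the Euclidean magnitude together with an unsigned orientation folded into the range [0°, 180°). A hardware design may approximate the square root and the arctangent instead; the helper name `magnitude_orientation` is hypothetical.

```python
import math
from typing import Tuple


def magnitude_orientation(gx: float, gy: float) -> Tuple[float, float]:
    """Return (magnitude, orientation in degrees) for one pixel.

    Assumption: unsigned orientation, i.e. opposite gradient directions
    map to the same angle in [0, 180).
    """
    magnitude = math.hypot(gx, gy)                       # sqrt(gx^2 + gy^2)
    orientation = math.degrees(math.atan2(gy, gx)) % 180.0
    return magnitude, orientation
```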

Depending on their orientation, the calculated gradients are then assigned to a number of orientation bins.

The vote for each bin is a function of the gradient magnitude. As Dalal and Triggs found, splitting the orientation range of 0° to 180° into 9 bins gives the best results when HOG features are used for human detection [Dal05]. We have implemented this binning in the VA example designs "HOG_9Bins_HistogramMax.va" and "HOG_9Bins_Histogram.va".

In addition in the example "HOG_4Bins_HistogramMax.va" we perform a splitting into 4 bins over

give the best results when using the HOG features

for human detection methods [Dal05]. In the VA example designs "HOG_9Bins_HistogramMax.va" and "HOG_9Bins_Histogram.va" we have implemented such binning.

In addition in the example "HOG_4Bins_HistogramMax.va" we perform a splitting into 4 bins over

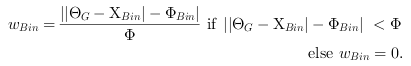

. The assignment or weighting

. The assignment or weighting

of a certain orientation

of a certain orientation

to a bin (with orientation

to a bin (with orientation

) is done

via interpolation:

) is done

via interpolation:

The interpolation uses the size of the angle steps between adjacent bins.
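The interpolation formula itself is not reproduced in this text, so the sketch below shows one common form under stated assumptions: the vote is the gradient magnitude, it is split linearly between the two nearest bins, bin j sits at j·Δθ with Δθ = span/N, and the orientation range wraps around. The name `bin_votes` and this bin placement are illustrative and not taken from the VA designs.

```python
import math
from typing import List


def bin_votes(theta: float, magnitude: float, n_bins: int = 9,
              span: float = 180.0) -> List[float]:
    """Split one gradient's vote between the two nearest orientation bins.

    Assumptions: bin j has orientation j * step with step = span / n_bins,
    and the range wraps around (an angle of `span` equals 0).
    """
    step = span / n_bins                 # angle step between adjacent bins
    votes = [0.0] * n_bins
    pos = (theta % span) / step          # fractional bin position
    lower = int(math.floor(pos)) % n_bins
    upper = (lower + 1) % n_bins         # last bin wraps to bin 0
    frac = pos - math.floor(pos)         # distance from the lower bin, in steps
    votes[lower] += magnitude * (1.0 - frac)
    votes[upper] += magnitude * frac
    return votes
```

With 9 bins, an orientation of 30° lies halfway between the bins at 20° and 40°, so each receives half of the magnitude. For a 4-bin layout, `n_bins=4` would be passed, and `span` should be set to the range the design actually uses.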

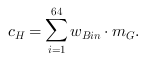

A single bin column of the Histogram of Oriented Gradients (each histogram covering a cell of 8x8 pixels) is then calculated as:
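A bin column of a cell histogram can be read as the sum of the interpolated votes of all pixels in that cell. The sketch below accumulates these sums for one 8x8 cell. It assumes per-pixel magnitude and orientation arrays (orientation in degrees) and an illustrative bin layout (bin j at j·180°/N, linear interpolation between neighbouring bins, wrap-around at 180°); `cell_histogram` is a hypothetical helper, not part of the VA designs.

```python
from typing import List, Sequence


def cell_histogram(mag: Sequence[Sequence[float]], ang: Sequence[Sequence[float]],
                   cy: int, cx: int, cell: int = 8, n_bins: int = 9,
                   span: float = 180.0) -> List[float]:
    """Return the orientation histogram of cell (cy, cx).

    Assumption: bin j has orientation j * span / n_bins and each pixel's
    magnitude is split linearly between its two nearest bins.
    """
    step = span / n_bins
    hist = [0.0] * n_bins
    for y in range(cy * cell, (cy + 1) * cell):
        for x in range(cx * cell, (cx + 1) * cell):
            pos = (ang[y][x] % span) / step   # non-negative bin position
            lower = int(pos) % n_bins
            frac = pos - int(pos)
            hist[lower] += mag[y][x] * (1.0 - frac)
            hist[(lower + 1) % n_bins] += mag[y][x] * frac
    return hist
```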

The histograms of the 8x8-pixel cells are then grouped into blocks of 2x2 cells to account for local variations in luminance or contrast. Neighbouring blocks overlap.
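The overlap value of the designs is not given in this text. The sketch below assumes that blocks are advanced by one cell (8 pixels) at a time, as in the default configuration of [Dal05], so that neighbouring blocks share half of their cells. Each block vector is the concatenation of its four cell histograms; `blocks_2x2` is a hypothetical name.

```python
from typing import List


def blocks_2x2(cells: List[List[List[float]]]) -> List[List[float]]:
    """Concatenate the histograms of every 2x2 group of cells.

    cells[cy][cx] is the histogram of cell (cy, cx).
    Assumption: block stride of one cell, so an (ny x nx) cell grid
    yields (ny - 1) * (nx - 1) overlapping blocks.
    """
    ny, nx = len(cells), len(cells[0])
    blocks: List[List[float]] = []
    for by in range(ny - 1):
        for bx in range(nx - 1):
            vec: List[float] = []
            for dy in (0, 1):
                for dx in (0, 1):
                    vec.extend(cells[by + dy][bx + dx])
            blocks.append(vec)
    return blocks
```

With 9-bin cell histograms, each block vector therefore has 4 × 9 = 36 entries.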

When the HOG descriptor is used for object recognition, block normalization improves the performance noticeably; the algorithm and the measured improvement are given in [Dal05].

Block normalization is omitted in the current design examples. In the designs "HOG_4Bins_HistogramMax.va" and "HOG_9Bins_HistogramMax.va", the orientation with the maximum histogram value is forwarded to DMA, whereas in "HOG_9Bins_Histogram.va" the complete Histogram of Oriented Gradients is sent to the PC.
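The selection step of the "HistogramMax" designs can be sketched as an argmax over the cell histogram. This is a minimal sketch under two assumptions not taken from the designs: ties go to the lowest bin index, and bin j stands for the orientation j·180°/N. The name `dominant_orientation` is hypothetical.

```python
from typing import Sequence, Tuple


def dominant_orientation(hist: Sequence[float],
                         span: float = 180.0) -> Tuple[int, float]:
    """Return (bin index, bin orientation in degrees) of the largest entry.

    max() returns the first maximal index, so ties go to the lowest bin.
    """
    best = max(range(len(hist)), key=lambda j: hist[j])
    return best, best * span / len(hist)
```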

The designs introduced in the following can easily be adapted to the user's specific needs. For example, a different number and step size of orientation bins, or block normalization according to [Dal05], can be implemented in addition.
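As a starting point for adding block normalization, the sketch below implements the L2-Hys scheme described in [Dal05]: L2 normalization, clipping of each component at 0.2, and a second L2 normalization. The value of the small constant `eps` and the function name `l2_hys` are illustrative assumptions.

```python
import math
from typing import List, Sequence


def l2_hys(block: Sequence[float], clip: float = 0.2,
           eps: float = 1e-6) -> List[float]:
    """L2-Hys normalization of one block vector (non-negative HOG entries).

    eps (illustrative value) keeps the division defined for all-zero blocks.
    """
    def l2(v: Sequence[float]) -> List[float]:
        norm = math.sqrt(sum(x * x for x in v) + eps * eps)
        return [x / norm for x in v]

    # Normalize, limit each component to `clip`, then renormalize.
    return l2([min(x, clip) for x in l2(block)])
```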
